“Data Driven Thinking” is written by members of the media community and contains fresh ideas on the digital revolution in media.

Today’s column is written by Brian Decker, a managing director at Mindshare, a GroupM agency.

Most people in the digital marketing world wouldn’t credit Albert Einstein as a founding father of digital marketing, but I beg to differ.

The famous quote attributed to Einstein, “Insanity is doing the same thing over and over again and expecting different results,” has been tattooed on my brain as a constant reminder of the critical importance of testing and dynamic optimizations.

For example, I worked on a very effective local campaign last year for a client in the quick-service restaurant (QSR) industry. Several months later, we replicated the original media plan's partners and tactics based on historical performance, but noticed several significant differences in results. Some of those differences related to the following:

- Geography matters. Media partners performed differently market by market.

- Testing different types of media buys (i.e., CPM versus dynamic CPM versus CPA versus CPC) drove different results.

- National digital media weight had a direct impact on local performance.

- Geotargeting will typically drive stronger direct response (DR) performance than local online media, including local digital newspapers.

Just because a strategy, tactic or media partner worked last year or even last month doesn’t guarantee the same results. The bottom line is that “complacency just doesn’t work” in dynamic media ecosystems.

Digital media is dynamic, and we need to be constantly focused, proactive and fluid as we build effective media investment portfolios that reflect the rapid changes happening in social, hyperlocal, mobile, video, display, multicultural and cloud-based platforms. In fact, this necessitates a more dynamic approach to targeting, leveraging data and measuring attribution, along with adaptive or “Darwinian” optimizations.

Darwinian Optimizations

Patience may be considered a virtue in the analog world. In digital, the ability to measure, track and optimize can be the difference between a campaign’s success and failure. For more direct response focused accounts, we typically use a seven-day performance period to evaluate future success.

Within the parameters of testing, failure is always viewed as an opportunity to learn. Therefore it is imperative that “we fail smart and fail quickly.” Unfortunately, media partners who cannot adapt or meet our client performance goals are removed from the media plan and typically from future planning consideration.

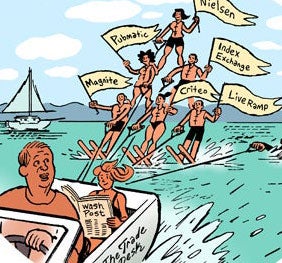

As a result, we are always testing two to six new partners against a wide array of tactics every quarter. From a Darwinian perspective, we take a “survival of the fittest” approach to testing, and only the strongest survive.
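For readers who think in code, the pruning step described above might be sketched roughly as follows. This is only an illustration under stated assumptions, not our actual tooling: the `DailyResult` record, the function names, the use of cost per acquisition (CPA) as the yardstick and the sample figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DailyResult:
    """One day of delivery for one media partner (hypothetical record)."""
    partner: str
    spend: float       # media cost for the day
    conversions: int   # attributed conversions for the day


def trailing_cpa(results, partner, window=7):
    """CPA for one partner over its most recent `window` days.

    Assumes `results` is already in chronological order.
    """
    rows = [r for r in results if r.partner == partner][-window:]
    spend = sum(r.spend for r in rows)
    conversions = sum(r.conversions for r in rows)
    # A partner with no conversions can never meet a CPA goal.
    return spend / conversions if conversions else float("inf")


def prune_partners(results, cpa_goal, window=7):
    """Split partners into those that meet the CPA goal and those that miss it."""
    keep, cut = [], []
    for partner in sorted({r.partner for r in results}):
        if trailing_cpa(results, partner, window) <= cpa_goal:
            keep.append(partner)
        else:
            cut.append(partner)
    return keep, cut


# Made-up sample data: seven days for each of two partners.
results = (
    [DailyResult("Partner A", 100.0, 10) for _ in range(7)]
    + [DailyResult("Partner B", 100.0, 2) for _ in range(7)]
)
keep, cut = prune_partners(results, cpa_goal=20.0)
print("keep:", keep)
print("cut:", cut)
```

In practice the goal metric would vary by client and by type of media buy, and the cut list would be the starting point for choosing which new partners to test next quarter.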

In a recent article, AdExchanger referred to this approach of ours as “tough love.” However, this is ultimately not about media. It is only about the client and meeting their business and marketing objectives. While maintaining great relationships with our media partners is exceptionally important, it is ultimately about moving rocks and measuring distance for our clients.

With this approach toward active optimization, there is no room for complacency. Once again, the choices we make today may be totally irrelevant tomorrow or the day after, given the rapid consumer adoption of individual and connected devices and emerging touch points. But does this invalidate Einstein’s principle about repeating the same thing and expecting different results? Or is this just the wrong question (relatively speaking)?

Follow Brian Decker (@briandecker6) and AdExchanger (@adexchanger) on Twitter.