“The Sell Sider” is a column written for the sell side of the digital media community.

Today’s column is written by Chris Kane, founder at Jounce Media.

Marketers who measure website traffic, online sales, app installs or even offline sales all take a fundamentally similar approach to attribution.

For each conversion event, they capture a user ID and then look for prior impressions served to that same user ID. There’s a lot of fancy math you can put on top of these systems, but they are all underpinned by an assumption that you can track a reasonably persistent user ID over time, linking the conversion event with prior ad exposure.
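The ID-matching step described above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the `Impression` and `Conversion` records, their fields and the `attribute` function are all hypothetical names introduced here.

```python
from dataclasses import dataclass


@dataclass
class Impression:
    user_id: str      # cookie ID or mobile ad ID seen at ad-serving time
    publisher: str    # hypothetical label for where the ad ran
    timestamp: int    # seconds since some epoch


@dataclass
class Conversion:
    user_id: str      # ID captured when the sale or install happened
    timestamp: int


def attribute(conversions, impressions):
    """Pair each conversion with earlier impressions that share its user ID."""
    by_user = {}
    for imp in impressions:
        by_user.setdefault(imp.user_id, []).append(imp)

    paths = []
    for conv in conversions:
        prior = [
            imp
            for imp in by_user.get(conv.user_id, [])
            if imp.timestamp < conv.timestamp
        ]
        paths.append((conv, prior))
    return paths
```

An impression only earns credit if its `user_id` matches the one on the conversion. If the same person shows up under two different IDs, the lookup returns an empty path and the impression's cost goes unrewarded.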

For some media companies, this system works nicely.

Allrecipes.com, for example, is naturally suited to be measured accurately by ecommerce marketers. The cookie ID associated with an impression on allrecipes.com is the same one that is associated with a subsequent sale on the marketer’s website. Similarly, The Weather Channel app is naturally suited to be measured accurately by app-install marketers. The mobile ad ID associated with the impression in The Weather Channel app is the same one that is associated with a subsequent app install.

But many media companies have attribution blind spots. Their inventory’s true performance is underrepresented in marketer attribution systems because the IDs associated with impressions incorrectly appear to be different from the IDs associated with sales. These attribution blind spots accrue lots of cost, but no conversions.

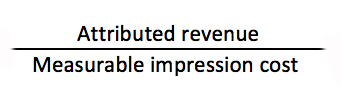

To account for these blind spots, the most accurate measure of a publisher’s ROI considers only the cost of impressions that are eligible for attribution. The simplest version of this math looks something like this:

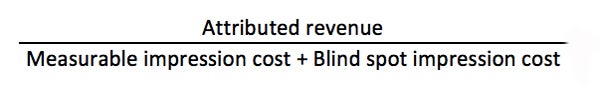

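But most attribution systems calculate ROI against the cost of all impressions, including those that fall in a blind spot and can never be credited with a conversion.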

The bigger the attribution blind spot, the more this ROI arithmetic incorrectly penalizes the media company. And attribution blind spots vary wildly across media companies – from as low as 1-2% to as high as 95%.
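To make the penalty concrete, here is a rough Python sketch of the two calculations. It assumes ROI is simply attributed revenue divided by media cost, which may not match the exact formula any given attribution system uses. The function names and dollar figures are invented for illustration.

```python
def roi(attributed_revenue: float, cost: float) -> float:
    """Assumed definition: attributed revenue per dollar of media cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return attributed_revenue / cost


def compare_roi(attributed_revenue: float, total_cost: float, blind_spot_cost: float):
    """Return (ROI on all spend, ROI on attribution-eligible spend only)."""
    eligible_cost = total_cost - blind_spot_cost
    return roi(attributed_revenue, total_cost), roi(attributed_revenue, eligible_cost)


# Hypothetical publisher: $1,000 of spend, $600 of it in a blind spot,
# $800 of revenue attributed to the remaining impressions.
reported, adjusted = compare_roi(800.0, 1000.0, 600.0)
print(reported, adjusted)
```

In this made-up case the reported ROI is 0.8, while the ROI on eligible spend is 2.0. The inventory did not perform any differently; the only thing that changed is whether unmeasurable cost is counted in the denominator.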

Here are the four most common attribution blind spots I have observed in my work with brands:

1. Safari

Lots of ink has already been spilled on Apple’s Intelligent Tracking Prevention program and the possible workarounds for achieving cookie stability in Safari. In spite of the occasional ad tech boast that “we’ve solved Safari,” media companies should assume that advertiser measurement systems do not properly assign post-view conversion credit to impressions served on a Safari browser.

DoubleClick, to its credit, does provide estimated Safari conversions to marketers, and brands that use that feature take a smart step toward correcting for attribution blind spots. Most marketers, however, are severely undercounting the value of Safari inventory.

2. Cross-device

This is another source of spilled ink, and another area where DoubleClick is doing good work to help marketers correctly measure the impact of app impressions on website sales or web impressions on app installs. But cross-device reporting isn’t enabled by default in standard DoubleClick attribution reports.

And even when marketers do inspect cross-device conversion paths, DoubleClick’s identity mapping is incomplete, particularly for connected TV inventory. Non-DoubleClick attribution systems that rely on cobbled-together identity graphs have an even spottier understanding of cross-device conversion paths.

3. Non-disclosed MAIDs

Now here’s one that every media company can fix. App inventory is a bit more complex to measure than web inventory because tracking systems don’t automatically detect the presence of a cookie. Instead, the media company must supply a mobile ad ID (MAID) for each impression.

Without this MAID, the impression is associated with userid=0. User zero accumulates enormous impression volumes and cost, but never converts – a classic attribution blind spot. There may be a few media companies for whom sharing MAIDs creates a meaningful data leakage risk, but these cases should be the exceptions, not the rule.
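A publisher can get a rough sense of its own "user zero" exposure by totaling impression cost per ID. The sketch below assumes a hypothetical log of `(maid, cost)` pairs in which a missing MAID appears as `None` or an empty string; real ad server logs will look different.

```python
from collections import defaultdict

ZERO_USER = "0"  # stand-in for the userid=0 bucket described above


def cost_by_user(impressions):
    """Sum impression cost per user ID, pooling missing MAIDs into user zero."""
    totals = defaultdict(float)
    for maid, cost in impressions:
        totals[maid or ZERO_USER] += cost
    return dict(totals)


def blind_spot_share(impressions):
    """Fraction of total cost that landed on user zero."""
    totals = cost_by_user(impressions)
    total_cost = sum(totals.values())
    if total_cost == 0:
        return 0.0
    return totals.get(ZERO_USER, 0.0) / total_cost
```

The higher the share returned by `blind_spot_share`, the more spend is landing on an ID that can never match a conversion.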

4. Cookie islands

The most challenging attribution blind spots occur when an ad tech measurement system accesses a cookie jar that is isolated from the user’s primary web browser. These cookie islands live in web views, such as referrals from Twitter, LinkedIn or Pinterest, and desktop apps, including Skype, Spotify or Outlook. The ad tech measurement system records a cookie ID, but that cookie ID never converts. And because there is such scarce user behavior associated with these cookie islands, they rarely get merged into cross-device ID maps.

As I’ve said in previous columns, ad spend doesn’t necessarily flow toward performant inventory – it flows toward measurable inventory. I argued back in 2016 that publishers need to embrace open measurement, and I continue to think that’s necessary. But it’s not sufficient.

Publishers need to take a much closer look at their inventory to identify their attribution blind spots, correct them where possible and educate marketers on how to properly account for the blind spots that remain.

Follow Jounce Media (@jouncemedia) and AdExchanger (@adexchanger) on Twitter.