“The Sell Sider” is a column written for the sell side of the digital media community.

Today’s column is written by Alessandro De Zanche, an independent audience strategy consultant.

With each passing quarter, the share of marketer budgets allocated to data grows, and with that growth comes greater scrutiny – rightly so. And yet this scrutiny has led to a growing tendency to “blame the data” when a campaign doesn’t perform as expected.

When that blame is misplaced, publisher reputations are harmed along with advertiser ROI.

The truth is, you can have the best data in the world, and your campaign will still fail if the data is not leveraged in a coordinated and thoughtful way. Let’s consider some of the errors of interpretation and execution that lead to such failure.

What can go wrong

We’ll start with a segment of “hip-hop lovers” (like all of the below examples, drawn from real experience).

One might assume that individuals in this group are young and into streetwear, but it would still be an assumption. Let’s say that the “hip-hop lovers” segment is built on a foundation of deterministic data from a music source: It indicates music preference, full stop.

If a marketer wanted to test the impact of targeting streetwear products to hip-hop lovers, he or she should keep in mind that the segment in itself has nothing to do with clothing or fashion and that if it didn’t perform it wouldn’t mean that the quality of the data is bad.

Obvious? I could write a book full of anecdotes on the naive interpretation, misuse and eventual misjudgment of audience segments.

Let’s stay on the same “hip-hop lovers” segment, this time for a campaign “on target,” promoting the release of a collection of hip-hop tracks. Now imagine using a display creative with a totally off-mark, cheesy image of “The Hoff” grinning in his underwear. Should we be surprised that users didn’t engage and the campaign didn’t perform?

Frequency capping, anyone?

Next, let’s consider the example of an audience segment of “luxury travelers to the Caribbean.” The segment is built on data coming from travel and airline portals and includes people who last year bought a first-class flight to the Caribbean and/or showed intense interest in those destinations.

Let’s presume our campaign, advertising a luxury holiday package in Cuba with a spot-on creative and call to action, performed poorly.

A common reaction might be that this was a bad segment. But if we look under the hood, we might realize that no frequency cap was set for this campaign and that users saw the ad an average of 25 times a day. Performance metrics suffer as users, bombarded repeatedly with the same message, begin to tune it out and KPIs are diluted.

In another, similar example, a frequency cap was set, but the creative had stayed identical every month since the previous year.
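For readers less familiar with the mechanics, a frequency cap is simply a per-user limit on impressions within a time window. Here is a minimal sketch in Python, assuming a hypothetical in-memory counter; the names `FrequencyCap`, `max_per_day` and `try_serve` are illustrative and do not come from any particular ad server.

```python
from collections import defaultdict
from datetime import date


class FrequencyCap:
    """Hypothetical per-user, per-day impression cap (illustrative only)."""

    def __init__(self, max_per_day):
        self.max_per_day = max_per_day
        # (user_id, day) -> impressions already served
        self._served = defaultdict(int)

    def try_serve(self, user_id, day):
        """Record the impression and return True if the user is under the cap."""
        key = (user_id, day)
        if self._served[key] >= self.max_per_day:
            return False
        self._served[key] += 1
        return True


# Assumed values: a cap of 3 per day and an arbitrary date.
cap = FrequencyCap(max_per_day=3)
today = date(2024, 1, 1)

# 25 ad requests from the same user in one day, as in the Caribbean example.
served = sum(cap.try_serve("user-1", today) for _ in range(25))
```

With the cap in place, only the first three of the 25 requests result in an impression. A cap alone would not help in the second case, though: it limits how often a user sees the ad, not how stale the creative is.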

Naughty sales reps

A few years ago I caught a “smart” sales rep who, in a pre-programmatic era, having realized that there was no more inventory for a certain segment he needed for his campaign in the run-up to Christmas, and eager to claim his end-of-year bonus at all costs, randomly applied any data he could find to the campaign, hoping not to get caught. (He didn’t know I am the Rottweiler of data quality gatekeepers.)

Not every product sells

Analysis doesn’t stop with the campaign itself and should also include the advertised product. I once ran an A/B/C test to evaluate the quality of our “cellular phone network intenders” (people in market for a new contract).

In agreement with the client and its agency we compared three groups: an untargeted audience, a demographically targeted one and the above-mentioned intenders segment.

Performance (a click to an order form) was at its highest on the intenders segment, followed by the demographic segment and the untargeted one. However, the client later informed us that conversions (sign-ups) were the opposite: The intenders segment had the lowest conversion rate of the three groups, followed by the demographic target. The group with the most sign-ups was the untargeted one.

This left us puzzled for a couple of days until we discovered the product was far more expensive than its competitors’ equivalent. Our intenders were “subject matter experts” in the market at that specific time for a cellular network contract. They knew tariffs; they compared the advertised offer to similar ones. They simply decided it wasn’t worth it.

Had we not investigated, a superficial review of performance would’ve fingered data quality as the culprit.

Victims of automation

The reality that we often struggle to accept is that automation has not only brought efficiency, it has also dumbed down some aspects of digital advertising. And this has, in some cases, led to a “blame the data” mindset.

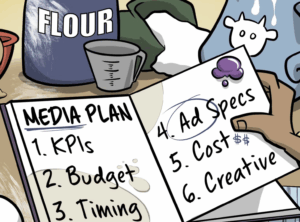

A successful campaign is built on a consistent approach between different phases and teams: product knowledge, choice of tools, planning, implementation, execution and measurement. It requires education, mentoring and a constant flow of communication, both internal and external (i.e., between advertiser and publisher, advertiser and agency, publisher and agency and so on), supported by agnostic ad tech.

Until AI shows further improvements in transparency and proven value to marketers, we must leave room for humans (and common sense) in the evaluation and application of data.

Ultimately, engaged audiences thrive when immersed in personalized, authentic and meaningful experiences. Those experiences require good-quality data and, just as important, data that is implemented in the right way and in line with best practices: Users couldn’t care less about our ad stacks and marketing gimmicks.

Follow Alessandro De Zanche (@fastbreakdgtl) and AdExchanger (@adexchanger) on Twitter.